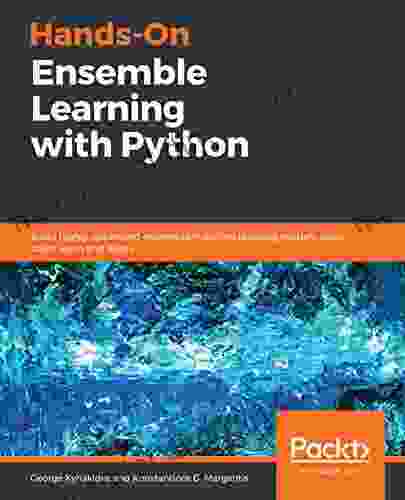

Build Highly Optimized Ensemble Machine Learning Models Using Scikit Learn And

Machine learning has become an integral part of our lives. From the recommendations we see on Netflix to the spam filters in our email, machine learning is making our lives easier and more efficient.

One of the most powerful machine learning techniques is ensemble learning. Ensemble methods combine multiple weak learners to create a single strong learner. This can be a very effective way to improve the accuracy and robustness of machine learning models.

In this article, we will discuss how to build highly optimized ensemble machine learning models using scikit-learn, a popular Python library that provides a wide range of machine learning algorithms and tools.

4.5 out of 5

| Language | : | English |

| File size | : | 9361 KB |

| Text-to-Speech | : | Enabled |

| Screen Reader | : | Supported |

| Enhanced typesetting | : | Enabled |

| Print length | : | 298 pages |

Machine learning is a subfield of artificial intelligence that gives computers the ability to learn without being explicitly programmed. Machine learning algorithms are trained on data, and then they can make predictions on new data.

There are many different types of machine learning algorithms. Two of the main categories are supervised learning and unsupervised learning.

- Supervised learning algorithms are trained on labeled data. This means that the data has been annotated with the correct answer. For example, a supervised learning algorithm could be trained to identify cats and dogs by being shown a set of images of cats and dogs, each of which has been labeled as "cat" or "dog".

- Unsupervised learning algorithms are trained on unlabeled data. This means that the data has not been annotated with the correct answer. For example, an unsupervised learning algorithm could be trained to cluster a set of data points into different groups, without being told what the groups represent.
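The difference between the two categories can be sketched in a few lines. This is only an illustration on synthetic data: the dataset from `make_blobs` and the choice of `LogisticRegression` and `KMeans` are assumptions made for the example, not something the article prescribes.

```python
from sklearn.cluster import KMeans
from sklearn.datasets import make_blobs
from sklearn.linear_model import LogisticRegression

# Synthetic data (assumed for illustration): 300 points from 2 groups,
# with one label per point
X, y = make_blobs(n_samples=300, centers=2, random_state=0)

# Supervised: the model is given both the features X and the labels y
classifier = LogisticRegression().fit(X, y)
predicted_labels = classifier.predict(X)

# Unsupervised: the model is given only X and has to find groups itself
kmeans = KMeans(n_clusters=2, n_init=10, random_state=0).fit(X)
cluster_ids = kmeans.labels_

print(predicted_labels[:5], cluster_ids[:5])
```

Note that the cluster ids carry no meaning of their own: cluster 0 is not guaranteed to correspond to label 0.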

Ensemble methods are a type of machine learning algorithm that combines multiple weak learners to create a single strong learner. Weak learners are typically simple models, such as decision trees or linear regression models. The idea behind ensemble methods is that by combining multiple weak learners, we can create a model that is more accurate and robust than any of the individual weak learners.

There are many different types of ensemble methods, but the most common are:

- Bagging (short for bootstrap aggregating) is a type of ensemble method that draws multiple bootstrap samples (random samples with replacement) from the training data. Each bootstrap sample is then used to train a weak learner. The predictions of the weak learners are combined, by majority vote for classification or by averaging for regression, to make a final prediction.

- Boosting is a type of ensemble method that trains weak learners sequentially. Each weak learner is trained on a weighted version of the training data, where the weights are assigned based on the performance of the previous weak learners. The predictions of the weak learners are then combined to make a final prediction.

- Stacking is a type of ensemble method that combines several base learners, usually of different model types, through a second-level model. The base learners are trained on the training data, and their predictions (typically produced out-of-fold via cross-validation) are then used as input features to train a meta-learner, which makes the final prediction.
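As a rough sketch of how the three families look in scikit-learn, the snippet below builds one estimator of each kind and compares them with 5-fold cross-validation. The dataset, the base learners, and the values of `n_estimators` are assumptions chosen to keep the example small. `AdaBoostClassifier` stands in for boosting here because it reweights the training data in the way described above.

```python
from sklearn.datasets import load_breast_cancer
from sklearn.ensemble import (
    AdaBoostClassifier,
    BaggingClassifier,
    StackingClassifier,
)
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score
from sklearn.naive_bayes import GaussianNB
from sklearn.tree import DecisionTreeClassifier

X, y = load_breast_cancer(return_X_y=True)

models = {
    # Bagging: each tree (the default base estimator) is fit on a bootstrap sample
    "bagging": BaggingClassifier(n_estimators=25, random_state=0),
    # Boosting: learners are fit in sequence on reweighted training data
    "boosting": AdaBoostClassifier(n_estimators=25, random_state=0),
    # Stacking: a meta-learner is fit on the base learners' predictions
    "stacking": StackingClassifier(
        estimators=[
            ("tree", DecisionTreeClassifier(max_depth=3, random_state=0)),
            ("nb", GaussianNB()),
        ],
        final_estimator=LogisticRegression(max_iter=1000),
    ),
}

# Mean 5-fold cross-validated accuracy for each ensemble
scores = {
    name: cross_val_score(model, X, y, cv=5).mean()
    for name, model in models.items()
}
for name, score in scores.items():
    print(f"{name}: {score:.3f}")
```

Which family performs best depends on the data, so a comparison like this is a starting point and not a ranking.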

scikit-learn includes a number of ensemble methods in its `sklearn.ensemble` module, covering bagging, boosting, and stacking.

To use scikit-learn for ensemble learning, import the appropriate estimator, fit it on the training data, and call `predict` on new data. For example, to create a bagging ensemble, you would use the following code:

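```python
from sklearn.datasets import load_breast_cancer
from sklearn.ensemble import BaggingClassifier
from sklearn.model_selection import train_test_split

# Load example data and hold out a test set
X, y = load_breast_cancer(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(X, y, random_state=0)

# Create a bagging classifier (decision trees are the default base estimator)
clf = BaggingClassifier(n_estimators=10, random_state=0)

# Train the classifier
clf.fit(X_train, y_train)

# Predict the labels of the test data
y_pred = clf.predict(X_test)
```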

There are a number of different ways to optimize ensemble models. Some of the most common techniques include:

- Hyperparameter tuning is the process of finding the optimal values for the hyperparameters of an ensemble model. Hyperparameters are parameters that control the behavior of the model, such as the number of weak learners or the learning rate.

- Feature selection is the process of selecting the most informative features to use for training the ensemble model. This can help to improve the accuracy and efficiency of the model.

- Data augmentation is the process of creating new training data by applying transformations to the existing data. This can help to improve the robustness of the model and to prevent overfitting.
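Hyperparameter tuning and feature selection can be combined in one search. The sketch below assumes a `Pipeline` of `SelectKBest` and `GradientBoostingClassifier` tuned with `GridSearchCV`. The grid values are arbitrary choices for illustration, and data augmentation is left out to keep the example short.

```python
from sklearn.datasets import load_breast_cancer
from sklearn.ensemble import GradientBoostingClassifier
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.model_selection import GridSearchCV
from sklearn.pipeline import Pipeline

X, y = load_breast_cancer(return_X_y=True)

# Feature selection runs inside the pipeline, so it is refit on each
# cross-validation training fold and never sees the validation fold
pipeline = Pipeline([
    ("select", SelectKBest(score_func=f_classif)),
    ("model", GradientBoostingClassifier(random_state=0)),
])

# Illustrative grid: number of kept features, number of weak learners,
# and the boosting learning rate
param_grid = {
    "select__k": [10, 20, "all"],
    "model__n_estimators": [50, 100],
    "model__learning_rate": [0.05, 0.1],
}

search = GridSearchCV(pipeline, param_grid, cv=3, scoring="accuracy")
search.fit(X, y)

print(search.best_params_)
print(f"best cross-validated accuracy: {search.best_score_:.3f}")
```

Putting the selector inside the pipeline matters: selecting features on the full dataset before cross-validation would leak information from the validation folds into training.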

In this section, we present a case study on building a highly optimized ensemble machine learning model using scikit-learn. We use the Wisconsin Breast Cancer dataset, which contains 569 tumor samples labeled as malignant or benign. The goal is to build a model that predicts whether a tumor is malignant or benign.

We will use a bagging ensemble of decision trees to build our model. We will first tune its hyperparameters, such as the number of trees and the fraction of training samples drawn for each tree. We will then select the most informative features to use for training the model. Finally, we will augment the training data by applying transformations to the existing data.
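One way the tuning and augmentation steps might look is sketched below. Several things here are assumptions, not the article's method: the grid values, the 75/25 split, and in particular the augmentation, which simply adds a jittered copy of each training row with Gaussian noise at 5% of each feature's standard deviation. Feature selection is omitted for brevity.

```python
import numpy as np
from sklearn.base import clone
from sklearn.datasets import load_breast_cancer
from sklearn.ensemble import BaggingClassifier
from sklearn.model_selection import GridSearchCV, train_test_split
from sklearn.tree import DecisionTreeClassifier

X, y = load_breast_cancer(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0
)

# Step 1: tune the bagging hyperparameters on the original training data
bagging = BaggingClassifier(DecisionTreeClassifier(random_state=0), random_state=0)
param_grid = {
    "n_estimators": [10, 50],
    "max_samples": [0.5, 1.0],
    "max_features": [0.5, 1.0],
}
search = GridSearchCV(bagging, param_grid, cv=3)
search.fit(X_train, y_train)

# Step 2 (assumed augmentation): append a noisy copy of every training row
rng = np.random.default_rng(0)
noise = rng.normal(0.0, 0.05 * X_train.std(axis=0), size=X_train.shape)
X_aug = np.vstack([X_train, X_train + noise])
y_aug = np.concatenate([y_train, y_train])

# Step 3: refit the best configuration on the augmented training set
model = clone(search.best_estimator_).fit(X_aug, y_aug)

print(search.best_params_)
print(f"test accuracy: {model.score(X_test, y_test):.3f}")
```

The search runs before augmentation on purpose: if noisy copies were added first, near-duplicates of a row could land in both the training and validation folds and inflate the cross-validation score.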

After optimizing our model, we will evaluate its performance on the held-out test data. We will calculate the accuracy, precision, recall, and F1-score of the model. We will also plot the ROC curve and report the area under it (AUC).
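The evaluation step can be sketched with the functions in `sklearn.metrics`. The model below is an untuned bagging classifier used only as a stand-in, and the split parameters are assumptions. Plotting is omitted, but the `fpr` and `tpr` arrays returned by `roc_curve` are the points of the ROC curve and can be passed to any plotting library.

```python
from sklearn.datasets import load_breast_cancer
from sklearn.ensemble import BaggingClassifier
from sklearn.metrics import (
    accuracy_score,
    f1_score,
    precision_score,
    recall_score,
    roc_auc_score,
    roc_curve,
)
from sklearn.model_selection import train_test_split

X, y = load_breast_cancer(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0
)

# Stand-in model (assumed): an untuned bagging ensemble of decision trees
model = BaggingClassifier(n_estimators=50, random_state=0).fit(X_train, y_train)

y_pred = model.predict(X_test)               # hard labels for threshold metrics
y_score = model.predict_proba(X_test)[:, 1]  # probability of class 1 for ROC/AUC

# In this dataset class 1 is "benign" and class 0 is "malignant", so the
# default precision/recall/F1 below describe the benign class
metrics = {
    "accuracy": accuracy_score(y_test, y_pred),
    "precision": precision_score(y_test, y_pred),
    "recall": recall_score(y_test, y_pred),
    "f1": f1_score(y_test, y_pred),
    "roc_auc": roc_auc_score(y_test, y_score),
}
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")

# Points of the ROC curve: false positive rate vs. true positive rate
fpr, tpr, thresholds = roc_curve(y_test, y_score)
```

To score the malignant class instead, pass `pos_label=0` to `precision_score`, `recall_score`, and `f1_score`.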

Ensemble methods are a powerful machine learning technique that can improve the accuracy and robustness of machine learning models. scikit-learn provides a wide range of ensemble methods, which makes it straightforward to build and optimize ensemble models for many different machine learning tasks.




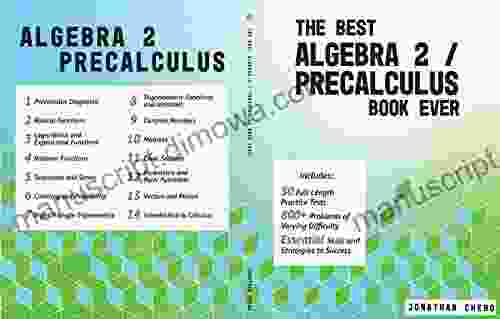

4.5 out of 5

| Language | : | English |

| File size | : | 9361 KB |

| Text-to-Speech | : | Enabled |

| Screen Reader | : | Supported |

| Enhanced typesetting | : | Enabled |

| Print length | : | 298 pages |